Key Takeaways

- Wikipedia serves as a high-stakes environment for testing AI's ability to maintain factual accuracy.

- The "Human-in-the-Loop" model is essential to prevent AI-generated hallucinations from polluting public knowledge.

- AI excels at structural drafting and sourcing, while humans remain superior at nuance and ethical judgment.

- Collaboration efficiency depends heavily on the interface and the level of trust the human editor has in the AI.

Why Wikipedia is the Perfect AI Sandbox

When scientists want to study how humans work with AI, they need a task that is complex enough that a machine can't solve it instantly, but structured enough that the results can be measured. Wikipedia fits that description. Unlike a private document, a wiki page has a public history: every change, every deleted comma, and every added citation is recorded. This creates a goldmine of data for researchers.

In these studies, the "editing task" usually involves updating an outdated article or creating a new one from a set of raw sources. When an AI is introduced, it doesn't just replace the human; it acts as a partner. Researchers look at measures like "edit distance": how much of the AI's suggestion did the human actually keep? If a human deletes 90% of what an AI wrote, the collaboration is failing. If they tweak a few words and keep the structure, the AI is providing genuine value.

The goal here isn't just to see if the AI is "smart." It is to see whether the human becomes lazier or more critical. There is a known phenomenon called "automation bias," where people start trusting the machine even when it is clearly wrong. By using Wikipedia's strict verification standards, researchers can track exactly when a human catches an AI's mistake and when they let it slide.
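The "how much did the human keep" question can be made concrete with a small calculation. The sketch below is only an illustration, not the metric any particular study used: it compares the AI draft and the final text word by word with Python's standard `difflib` module and reports the share of the draft's words that survive. The function name `retained_fraction` and the sample sentences are made up for this example.

```python
from difflib import SequenceMatcher


def retained_fraction(ai_draft: str, final_text: str) -> float:
    """Share of the AI draft's words that still appear, in order, in the final text."""
    ai_words = ai_draft.split()
    final_words = final_text.split()
    if not ai_words:
        return 0.0
    # autojunk=False keeps difflib from discarding frequent words in long texts.
    matcher = SequenceMatcher(a=ai_words, b=final_words, autojunk=False)
    kept = sum(block.size for block in matcher.get_matching_blocks())
    return kept / len(ai_words)


# Hypothetical example: the editor changed one word out of nine.
ai_draft = "The bridge opened in 1932 and spans the harbour"
human_final = "The bridge opened in 1932 and crosses the harbour"
print(f"{retained_fraction(ai_draft, human_final):.0%} of the AI draft was kept")
```

A low value would correspond to the "human deleted most of it" case described above, while a high value means the editor mostly kept the suggestion. A word-level ratio like this says nothing about whether the kept text is true, so it would need to be read alongside fact-checking results.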

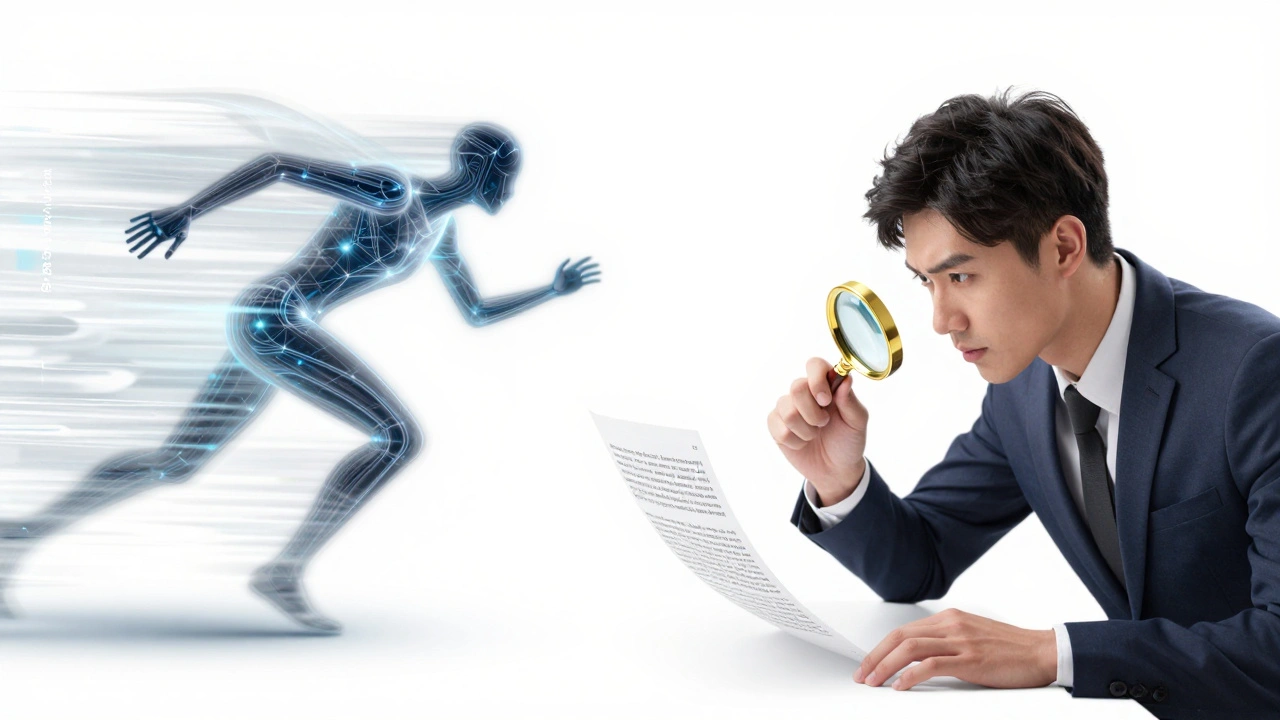

The Tug-of-War Between Speed and Accuracy

One of the biggest findings in human-AI collaboration studies is the trade-off between efficiency and truth. When humans use large language models (LLMs) like GPT-4 or Claude to draft sections, the speed of production skyrockets. A task that once took a seasoned editor three hours might now take thirty minutes. But this speed comes with a hidden cost: a higher risk of "hallucinations."

In a recent experimental setup, editors were asked to summarize complex academic papers into a Wikipedia-style format. Those working alone were slow but accurate. Those using AI were incredibly fast, but they occasionally integrated fake citations: references that looked real but pointed to non-existent papers. The most successful collaborators weren't the ones who trusted the AI the most, but the ones who treated the AI as a "suggestible intern." They used the AI to organize the data and then spent their saved time rigorously double-checking the facts.

| Metric | Human Only | AI Only | Human-AI Hybrid |
|---|---|---|---|
| Drafting Speed | Slow | Instant | Fast |
| Factual Precision | High | Variable (Hallucinations) | Highest (Verification) |
| Stylistic Consistency | High | Medium | High |
| Source Integration | Tedious | Fast but Risky | Optimized |

Breaking Down the Human-in-the-Loop Process

To make these collaborations work, researchers focus on a concept called Human-in-the-Loop (HITL). This isn't just a fancy term; it is a specific workflow where the AI is never the final decision-maker. In Wikipedia tasks, this usually follows a three-step cycle: Generation, Critique, and Refinement.

First, the AI generates a draft based on provided sources. In this phase, the AI handles the "grunt work": summarizing long texts and formatting the data into the standard wiki layout.

Second, the human enters the critique phase. This is where the critical thinking happens. The human checks the AI's claims against the actual sources. They might find that the AI misinterpreted a nuance in a scientific paper or used a tone that is too promotional (which violates Wikipedia's "neutral point of view" policy).

Finally, the refinement happens. The human rewrites the problematic sections and approves the rest.

The interesting part for researchers is how this cycle changes over time. After a few hours, some humans start to trust the AI more, which can be a good thing (increased flow) or a bad thing (decreased vigilance). The sweet spot is "calibrated trust," where the human knows exactly where the AI is likely to fail and focuses their energy there.
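The Generation, Critique, and Refinement cycle described above could be sketched roughly as follows. This is a minimal illustration under stated assumptions, not a description of any real editing tool: the `Claim` and `Draft` classes, the three function names, and the sample sources are all hypothetical, and the "AI" step is a stand-in that simply copies a summary per source. The point it tries to show is the gate: nothing is publishable until a human has approved every claim.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Claim:
    text: str
    source_id: str
    approved: bool = False  # set only during the human refinement step


@dataclass
class Draft:
    claims: List[Claim] = field(default_factory=list)

    def publishable(self) -> bool:
        # The AI is never the final decision-maker: every claim needs sign-off.
        return bool(self.claims) and all(c.approved for c in self.claims)


def generate(sources: Dict[str, str]) -> Draft:
    """Generation: stand-in for the AI step, one unapproved claim per source."""
    return Draft([Claim(text, source_id) for source_id, text in sources.items()])


def critique(draft: Draft, supported: Callable[[Claim], bool]) -> List[int]:
    """Critique: positions of claims the human found unsupported or non-neutral."""
    return [i for i, claim in enumerate(draft.claims) if not supported(claim)]


def refine(draft: Draft, flagged: List[int], rewrite: Callable[[Claim], str]) -> Draft:
    """Refinement: the human rewrites flagged claims and approves the rest."""
    for i, claim in enumerate(draft.claims):
        if i in flagged:
            claim.text = rewrite(claim)
        claim.approved = True
    return draft


# Made-up example data; the lambdas stand in for a human reviewer's judgment.
sources = {
    "paper-1": "The treatment is a miracle cure.",
    "paper-2": "The trial enrolled 120 adults.",
}
draft = generate(sources)
flagged = critique(draft, supported=lambda c: "miracle" not in c.text)
draft = refine(draft, flagged, rewrite=lambda c: "The treatment improved outcomes in the trial.")
print(draft.publishable())  # True, because a human reviewed every claim
```

Keeping `approved` off by default is the design choice that matters here: skipping the human steps leaves the draft unpublishable rather than silently accepted.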

The Role of Interface Design in Collaboration

It is not just about the AI's brain; it is about how the AI communicates. Studies have shown that the way an AI presents a suggestion drastically changes how a human edits it. If an AI says, "I am 100% sure this is the correct date," humans are less likely to check it. However, if the AI says, "I think this is the date, but you should check the source," the human is much more likely to verify the fact. This is called "uncertainty signaling."

Researchers have experimented with different interfaces for Wikipedia editing. Some use a side-by-side view where the AI highlights the specific sentence it is unsure about. Others use a "chat-integrated" editor where the human can ask the AI to "find a better source for this claim" in real time. The results consistently show that when the AI acts as a tool that asks questions rather than a machine that gives answers, the final quality of the Wikipedia article is higher. It keeps the human in a state of active cognition rather than passive acceptance.

Overcoming the "Knowledge Erosion" Risk

There is a darker side to this collaboration that researchers are deeply concerned about: knowledge erosion. If we rely too heavily on AI to summarize and edit our collective knowledge, we might lose the ability to understand the deep complexities of a topic. Wikipedia is built on the idea that the act of editing is a learning process. When you manually synthesize five different sources to write a paragraph, you are learning the subject. If an AI does that synthesis for you, and you just click "approve," you've bypassed the learning phase.

Some studies suggest that while the *output* (the article) remains high-quality, the *editor's* own knowledge of the topic ends up significantly lower than that of editors who wrote the article manually. This suggests that for AI to be a true partner in education and knowledge building, it needs to be designed to challenge the human, not just serve them. For example, an AI could ask the editor, "Why do you think this source contradicts the previous one?" instead of just merging the two facts.

The Future of Collaborative Knowledge Bases

As we move toward more advanced natural language processing (NLP), the line between the author and the tool will blur further. We are seeing the rise of "co-pilots" for knowledge management. In the context of Wikipedia, this could mean AI that doesn't just write text, but actively monitors the entire encyclopedia for contradictions. Imagine an AI that flags a conflict between a page on "Quantum Physics" and a page on "Linear Algebra" and invites a human expert to resolve the discrepancy.

This shifts the human's role from "writer" to "curator" and "arbitrator." The expertise moves from the ability to draft a clean sentence to the ability to judge the truth and ethical implications of information. The studies conducted on Wikipedia are essentially a blueprint for how we will handle all digital information in the future. Whether it is a medical database, a legal archive, or a corporate wiki, the lessons remain the same: AI provides the scale, but humans provide the soul and the truth-check.

Why use Wikipedia for AI studies instead of private documents?

Wikipedia provides a transparent, public record of every edit. This allows researchers to see exactly how a human changed an AI's suggestion, providing a clear trail of evidence that is impossible to get from private files. Additionally, Wikipedia's strict rules on neutrality and sourcing provide a standardized benchmark for measuring AI accuracy.

What is "automation bias" in the context of AI editing?

Automation bias is the tendency for humans to trust a suggestion from an automated system even when it contradicts their own senses or knowledge. In Wikipedia tasks, this happens when an editor accepts a factually incorrect statement simply because the AI presented it confidently, leading to the accidental introduction of errors into the encyclopedia.

Can AI completely replace human Wikipedia editors?

Currently, no. While AI is excellent at summarizing and formatting, it lacks the ability to truly understand context, ethics, and the nuanced social consensus required for many Wikipedia articles. AI can hallucinate facts, whereas human editors provide the critical verification and ethical judgment necessary to maintain a reliable knowledge base.

What is the "Human-in-the-Loop" (HITL) model?

HITL is a design pattern where an AI system performs a task but requires human intervention at key stages to ensure quality and accuracy. In editing, the AI might draft a section, but the human must review, critique, and approve the content before it is published. This prevents the AI from acting autonomously and making unchecked errors.

How does "uncertainty signaling" help human editors?

Uncertainty signaling is when an AI explicitly tells the user it is unsure about a piece of information (e.g., "I'm 60% sure about this date"). This triggers a higher level of vigilance in the human editor, making them more likely to double-check the source and reducing the chance that a hallucination makes it into the final text.
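As a rough illustration of uncertainty signaling, the sketch below formats a suggestion differently depending on a confidence score. Everything here is an assumption for the sake of the example: the function name `present_suggestion`, the 0.9 cut-off, and the idea that the model exposes a usable confidence number at all (real model confidences may be poorly calibrated).

```python
def present_suggestion(text: str, confidence: float, verify_below: float = 0.9) -> str:
    """Attach a confidence note, and a visible check prompt when confidence is low."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    note = f"(model confidence: about {round(confidence * 100)}%)"
    if confidence < verify_below:
        # Low confidence: nudge the editor to open the source before accepting.
        return f"[CHECK SOURCE] {text} {note}"
    return f"{text} {note}"


# Hypothetical suggestion with a 60% confidence score.
print(present_suggestion("The society was founded in 1887.", 0.6))
```

The threshold is a tuning knob, not a recommendation: set too low, editors rarely see a prompt; set too high, every suggestion is flagged and the signal loses meaning.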

Next Steps and Practical Applications

If you are looking to apply these findings to your own work, whether you're a student, a professional writer, or a business owner, here is how to handle AI collaboration effectively:

- Adopt the "Skeptical Editor" Persona: Never treat an AI output as a final product. Treat it as a first draft from an enthusiastic but unreliable source.

- Focus on Source Verification: Spend more time checking citations than rewriting sentences. The AI can handle the prose; you must handle the truth.

- Use Iterative Prompting: Instead of asking for a full article, ask for an outline, then a section, then a critique of that section. This keeps you engaged in the process.

- Audit Your Trust: Every few pages, intentionally look for a mistake. This keeps your "critical eye" sharp and prevents automation bias from setting in.
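
One way to make the "Audit Your Trust" habit routine is to pick a random subset of already-accepted AI suggestions and re-verify them against their sources. The sketch below is a hypothetical helper, not part of any Wikipedia tooling; the name `pick_spot_checks`, the 20% default rate, and the `edit-N` identifiers are assumptions chosen for illustration.

```python
import random
from typing import List, Optional, Sequence


def pick_spot_checks(accepted_ids: Sequence[str], rate: float = 0.2,
                     seed: Optional[int] = None) -> List[str]:
    """Randomly choose accepted suggestions to re-verify, keeping original order."""
    if not 0.0 < rate <= 1.0:
        raise ValueError("rate must be in (0, 1]")
    if not accepted_ids:
        return []
    rng = random.Random(seed)  # pass a seed only when a repeatable audit is wanted
    count = max(1, round(len(accepted_ids) * rate))  # always audit at least one
    chosen = set(rng.sample(range(len(accepted_ids)), count))
    return [item for i, item in enumerate(accepted_ids) if i in chosen]


# Hypothetical identifiers for ten suggestions the editor already accepted.
accepted = [f"edit-{i}" for i in range(10)]
print(pick_spot_checks(accepted, rate=0.2))
```

Random selection is used so the audit does not just revisit the suggestions the editor already felt unsure about, which is where automation bias is least likely to hide.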