When a major crisis hits, like a natural disaster, political upheaval, or a global health emergency, rumors spread faster than facts. Social media explodes with claims, videos, and eyewitness accounts. But millions of people turn to Wikipedia for clarity. And somehow, despite being edited by volunteers, Wikipedia rarely becomes a vector for misinformation. How does it pull that off?

Wikipedia doesn’t wait for the news to break

Wikipedia doesn’t have reporters on the ground. It doesn’t have a newsroom. But it does have a set of rules that kick in the moment something starts trending. The key is verifiability. If a claim can’t be backed up by a reliable source, it doesn’t go in. Not even if 10,000 people are sharing it on Twitter.

During the 2020 Beirut explosion, false reports claimed the blast was caused by a missile strike. Within minutes, editors noticed a spike in edits trying to insert that claim. They didn’t just revert the edits one by one; they locked the page. Then they waited. Hours later, major news outlets like Reuters and the BBC confirmed the cause: a fire at a warehouse storing ammonium nitrate. Only then was the article updated. The false claim? Gone from the article, though, like every edit, it remains visible in the page’s public history.

Reliable sources are the gatekeepers

Wikipedia’s sourcing rules are strict. They favor sources with editorial oversight: newspapers, academic journals, government reports, and major broadcast networks. Self-published material such as blogs, tweets, YouTube videos, and forum posts is generally treated as unreliable, however popular it is.

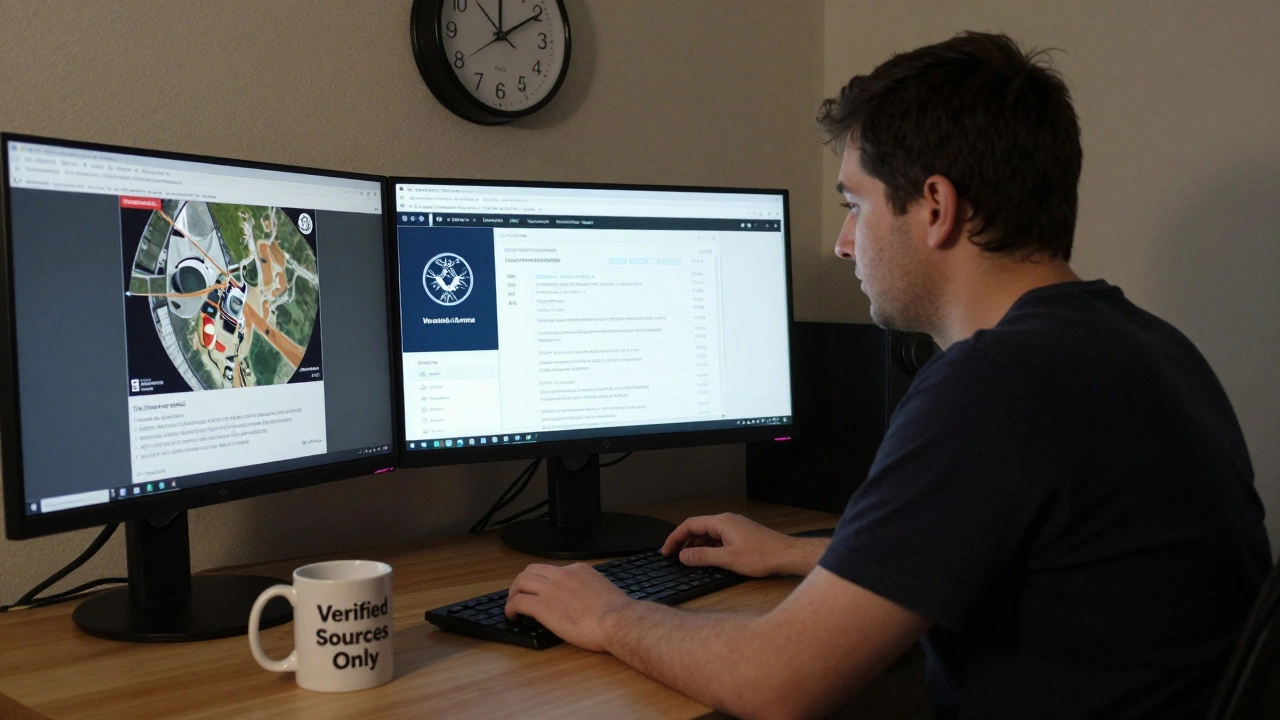

During the 2023 Turkey-Syria earthquake, a video went viral showing a collapsed hospital. Many assumed it was from the main quake. But Wikipedia editors checked the metadata. The video was from a 2016 earthquake in Nepal. They flagged it. The edit was reverted. A note was added to the talk page explaining why. That note stayed visible for weeks, helping future readers avoid the same mistake.

Wikipedia doesn’t care whether something is trending. It cares whether it’s documented by a source with a track record of fact-checking. That’s why you won’t find a Wikipedia article saying “President X resigned” just because a meme says so. You’ll find it only after reliable outlets report it.

Conflict pages get special treatment

Not all pages are created equal. When a crisis involves conflict, such as war, protests, or political violence, Wikipedia locks down the most sensitive pages. Administrators can apply “semi-protection,” which blocks edits from unregistered users and brand-new accounts, or “full protection,” which limits editing to administrators. This isn’t censorship. It’s damage control.

In 2024, during the Israel-Hamas conflict, over 200 Wikipedia pages were protected. One of them was the article on “Gaza Strip casualties.” For weeks, edit wars raged. Proponents of different narratives tried to insert numbers that weren’t verified. The system didn’t pick a side. Instead, it showed the numbers from the UN, WHO, and Red Cross, along with footnotes explaining where each figure came from. The page became a neutral archive of verified data, not a battleground.

The “pending changes” safety net

Wikipedia has a feature called “pending changes.” It’s like a holding area for edits. New or unregistered users can still suggest changes, but those changes don’t go live to readers until a trusted editor reviews them. It is one of the tools available when a page is flooded with edits during a crisis.

When a regional passenger jet and a U.S. Army helicopter collided near Washington, D.C., in January 2025, rumors flew: “It was a drone strike.” “The pilot was drunk.” Within 15 minutes, the article was put under pending changes. For the next 72 hours, every edit had to be approved. Editors checked FAA reports, NTSB statements, and live press briefings. False claims? Blocked. Verified updates? Published with sources.

This system isn’t perfect. It can be slow. But it’s designed to be right before it’s fast. And that’s the point.
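For readers who like to see a mechanism spelled out, here is a deliberately simplified Python model of the holding-area idea. It assumes a toy rule: an edit from an account flagged as trusted goes live at once if nothing is already waiting for review; every other edit waits in a queue until a reviewer accepts or rejects it. The class and field names are hypothetical. On Wikipedia itself, pending changes is provided by the MediaWiki FlaggedRevs extension and works on page revisions, not on a queue like this one.

```python
from dataclasses import dataclass, field


@dataclass
class Edit:
    author: str
    text: str
    trusted: bool  # hypothetical flag standing in for "reviewer or established account"


@dataclass
class Article:
    published: str  # the version ordinary readers see
    pending: list = field(default_factory=list)  # edits held for review, oldest first

    def submit(self, edit: Edit) -> None:
        # Toy rule: a trusted edit goes live only when nothing is already awaiting review.
        if edit.trusted and not self.pending:
            self.published = edit.text
        else:
            self.pending.append(edit)

    def review_next(self, accept: bool) -> None:
        # A reviewer decides on the oldest held edit; rejected edits never go live.
        edit = self.pending.pop(0)
        if accept:
            self.published = edit.text


if __name__ == "__main__":
    page = Article(published="Verified summary")
    page.submit(Edit("anonymous", "It was a drone strike", trusted=False))
    print(page.published)  # readers still see the verified summary
    page.review_next(accept=False)
    print(len(page.pending))  # the rejected rumor is no longer waiting
```

The trade-off described above shows up directly in the sketch: nothing in the queue reaches readers until someone reviews it, which is slower but keeps unreviewed claims off the live page.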

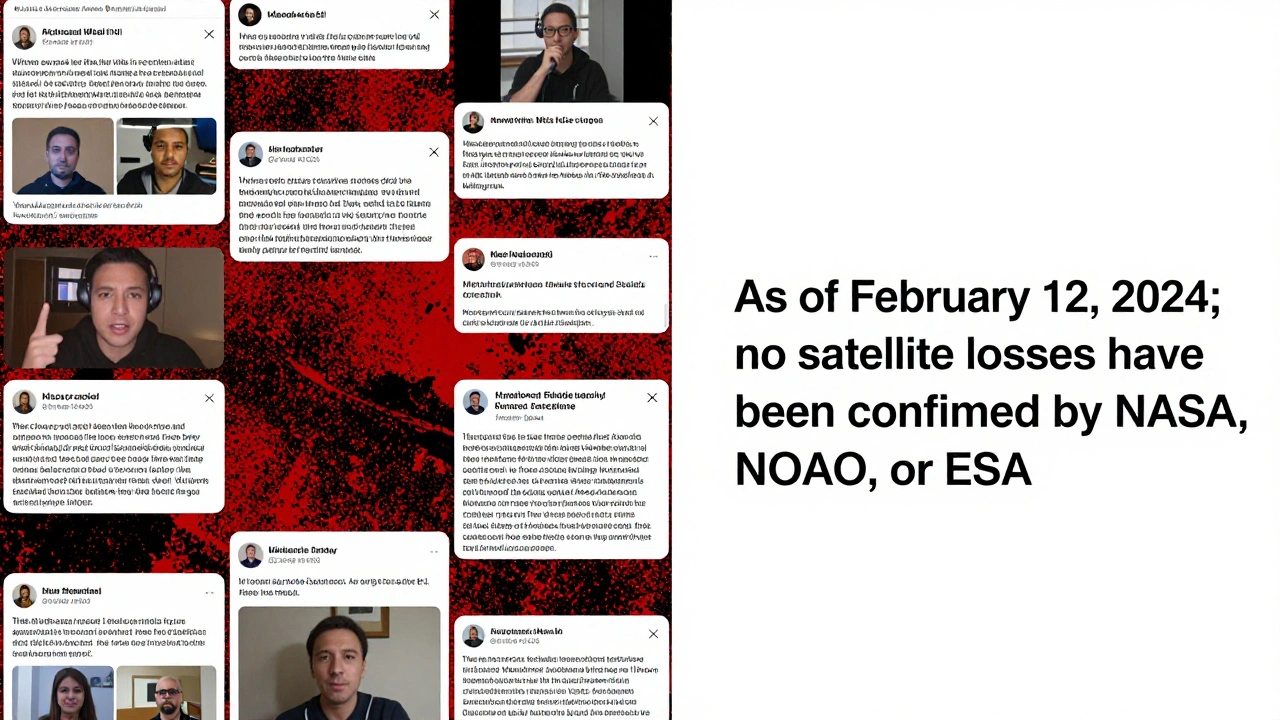

What happens when no source exists?

Sometimes, during a fast-moving crisis, no reliable source has confirmed anything yet. What then?

Wikipedia doesn’t leave the page blank. It doesn’t make up answers. Instead, it uses a simple, honest phrase: “As of [date], there are no confirmed reports.”

During the 2024 solar storm scare, social media claimed satellites were falling from orbit. No major agency confirmed it. Wikipedia’s article on “Solar storm of 2024” simply stated: “As of February 12, 2024, no satellite losses have been confirmed by NASA, NOAA, or ESA.”

That’s it. No speculation. No drama. Just a quiet, clear statement that leaves no room for guesswork.

Wikipedia’s secret weapon: Talk pages

Every Wikipedia article has a companion “Talk” page. This is where editors debate, argue, and collaborate. During crises, these pages become live logs of how information is verified.

When a rumor about a nuclear incident in Ukraine spread in early 2025, the Talk page for “Chernobyl Exclusion Zone” had 47 comments in 90 minutes. Editors linked to IAEA statements, compared satellite imagery from Sentinel-2, and cross-referenced local news in Ukrainian. The final consensus? No radiation spike. The rumor was based on a misinterpreted thermal image.

That entire conversation? Archived. Public. Searchable. Anyone can read it. That’s transparency in action.

Why Wikipedia works better than news sites during chaos

News outlets rush to publish. They want to be first. Wikipedia doesn’t care about being first. It cares about being right. That’s why, after the 2023 Maui wildfires, Wikipedia’s article on the fires had fewer errors than major news websites. Reuters, CNN, and AP all published early estimates of lost homes that turned out to be wrong. Wikipedia waited. When the Maui County government released official data 36 hours later, Wikipedia updated the article, replacing the earlier figures and citing the official source.

Wikipedia doesn’t have deadlines. It has sources. And sources can be checked. Rumors can’t.

What you can learn from Wikipedia’s approach

You don’t have to be an editor to use Wikipedia’s method. Next time you see a shocking claim:

- Check if it’s on Wikipedia. If it is, read the sources at the bottom.

- If it’s not on Wikipedia, search for the same claim on trusted news sites. If only one small blog mentions it, be skeptical.

- Look for consistency. If several independent major outlets report the same thing, it’s likely true. If it’s only on TikTok or Reddit, treat it with caution.

- Don’t trust emotion. The most viral stories are often the least accurate.

Wikipedia’s model isn’t magic. It’s just discipline. And in a world drowning in noise, that’s the most valuable thing there is.

Does Wikipedia ever get rumors wrong during crises?

Yes, briefly. But because Wikipedia’s edits are public and reversible, errors are usually caught within hours. During the 2022 Ukraine invasion, a false claim about a missile hitting a civilian airport appeared for 11 minutes before being reverted. The edit history is still visible, so anyone can see how it was corrected. That transparency is the system’s strength.

Can anyone edit Wikipedia during a crisis?

Usually, yes. Most pages stay open to everyone. But during major events, sensitive pages may be protected, so that only established accounts (or, under full protection, only administrators) can edit them, or placed under pending changes, so that edits from newer accounts are reviewed before going live. This helps prevent vandalism and keeps unverified claims off the live page.

Why doesn’t Wikipedia use AI to fact-check rumors?

AI struggles with context. It might treat a satirical article as real, or miss that a quote was taken out of context. Human editors understand nuance. They know when a source is credible, when a video is doctored, or when a statistic is being misused. That’s why judgments about sources rest with people. Automated tools such as the anti-vandalism bot ClueBot NG help catch obvious vandalism, but they don’t decide what counts as a reliable source.

Are there any official Wikipedia teams that handle crises?

No official team exists. But there are hundreds of volunteer editors who monitor breaking news 24/7. Many of them are journalists, researchers, or former emergency responders. They form informal networks, sharing alerts and sources. It’s decentralized, but highly coordinated.

How does Wikipedia handle conflicting reports from different countries?

It lists them all, with sources. For example, during the 2024 Taiwan Strait tensions, Wikipedia’s article included figures from Taiwan’s defense ministry, China’s state media, and independent analysts. Each was clearly labeled with its origin. The article didn’t pick a winner. It just showed what each side claimed, and where they got it from.