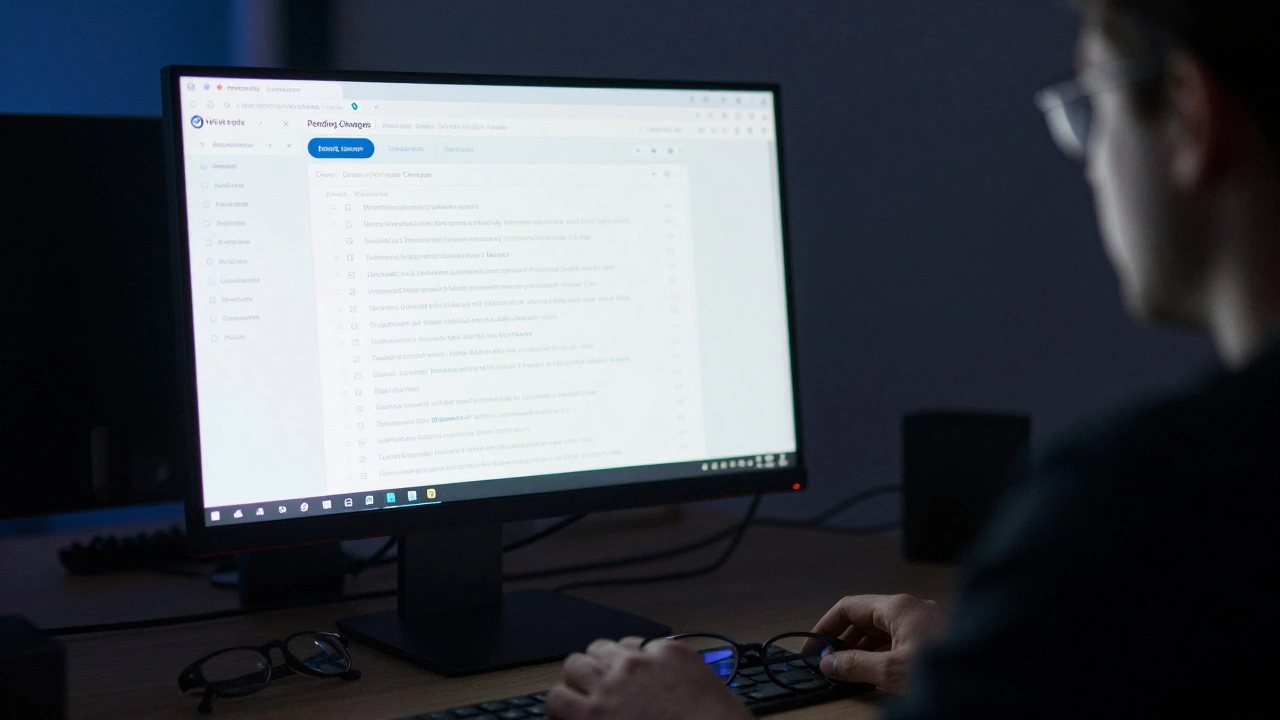

Imagine you are editing a page about a major historical event. You add a well-sourced fact, but instead of it appearing immediately, it sits in a queue. Someone else has to look at it before the world sees your contribution. This is the reality for many editors on Wikipedia, the free online encyclopedia that relies on community-driven governance. For pages protected under the Pending Changes policy, this review process is not just a formality; it is a critical line of defense against misinformation and vandalism.

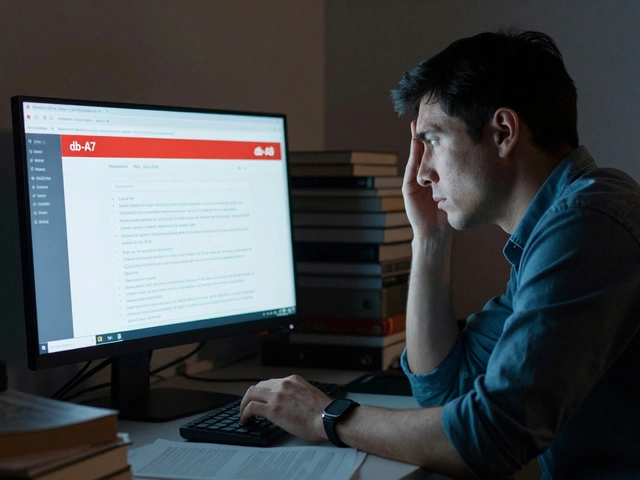

If you have been granted the right to review these changes, you hold a significant responsibility. You are no longer just an editor; you are a gatekeeper. But what exactly does that mean? How do you decide which edits to accept and which to reject? And perhaps most importantly, how do you handle the pressure of making quick decisions without stifling good contributions?

Understanding the Core Purpose of Pending Changes

To understand your role as a reviewer, you first need to understand why the system exists. The Pending Changes policy was introduced to combat vandalism and unconstructive editing on high-traffic or controversial articles. Before this policy, bad edits could remain visible for hours or even days until someone noticed them. With Pending Changes, edits from new or unregistered users are held back from public view until a trusted user reviews and accepts them.

This system shifts the burden from "revert after damage" to "verify before publication." It protects the integrity of the article while still allowing collaboration. However, it introduces a new challenge: editorial lag. If reviewers are too slow, the article becomes stale. If they are too strict, legitimate contributors get frustrated and leave. Your job is to find the balance between safety and openness.

The Three Pillars of Reviewer Decision-Making

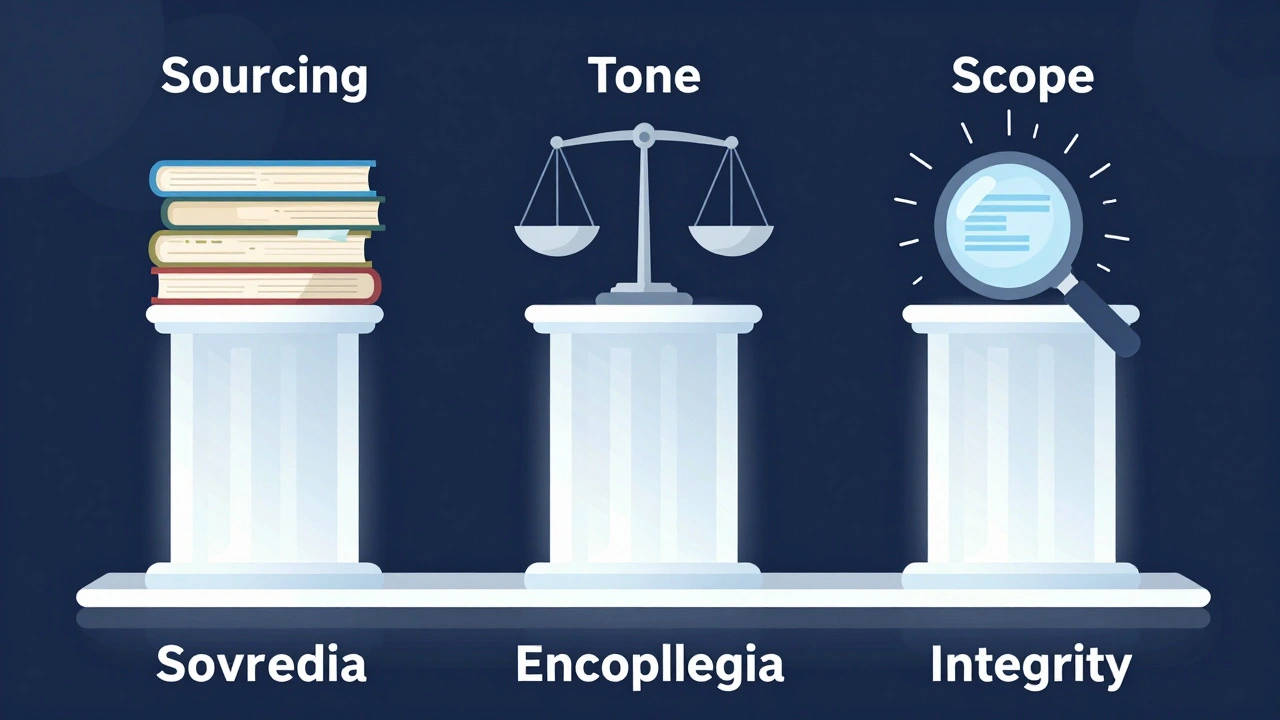

When you sit down to review a batch of pending changes, you might feel overwhelmed by the volume. Don't panic. Most decisions come down to three core criteria: sourcing, tone, and scope. These pillars help you filter through noise quickly.

- Sourcing: Does the edit include reliable references? Is the source verifiable? If an editor adds a claim like "This politician supports tax cuts," but provides no link or citation, you should flag it. Wikipedia requires verifiability. If the source is a personal blog, a social media post, or an unreliable news outlet, the edit fails this test.

- Tone: Is the language neutral? Wikipedia adheres to Neutral Point of View (NPOV). Edits that use loaded language, such as "the disastrous policy" or "the brilliant leader," violate this principle. Even if the fact is true, the phrasing must be objective. Look for adjectives that imply judgment rather than description.

- Scope: Is the information encyclopedic? Not every true statement belongs on Wikipedia. Trivia, minor details, or overly specific anecdotes often clutter articles. Ask yourself: Would a general reader care about this detail? If it doesn't add significant context to the main topic, it likely doesn't belong.

By focusing on these three areas, you can make consistent decisions without getting bogged down in subjective preferences. Remember, you are not judging the editor's character; you are evaluating the quality of the text.
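
The three checks can also be written down as a small checklist. The Python sketch below is purely illustrative: `PendingEdit`, its boolean fields, and `failed_pillars` are hypothetical names that stand in for a reviewer's own judgment, not part of any Wikipedia tool or API.

```python
from dataclasses import dataclass


@dataclass
class PendingEdit:
    """Hypothetical record of a reviewer's answers to the three questions."""
    has_reliable_source: bool  # Sourcing: is the claim backed by a verifiable, reliable reference?
    is_neutral: bool           # Tone: is the wording free of loaded language?
    is_encyclopedic: bool      # Scope: would a general reader care about this detail?


def failed_pillars(edit: PendingEdit) -> list[str]:
    """Return the names of the pillars the edit fails, in a fixed order."""
    checks = [
        ("sourcing", edit.has_reliable_source),
        ("tone", edit.is_neutral),
        ("scope", edit.is_encyclopedic),
    ]
    return [name for name, passed in checks if not passed]


# An unsourced but neutral, on-topic claim fails only the sourcing check.
edit = PendingEdit(has_reliable_source=False, is_neutral=True, is_encyclopedic=True)
print(failed_pillars(edit))  # ['sourcing']
```

Returning the list of failed checks, rather than a bare yes or no, gives you the specific reason to quote when you explain a rejection to the editor.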

Handling Common Types of Pending Edits

Not all pending changes are created equal. Some are obvious fixes, while others require careful scrutiny. Here is how to approach the most common scenarios you will encounter.

Minor Fixes and Typos: These are the easiest to approve. If an editor corrects a spelling mistake, fixes a broken link, or adjusts formatting, accept it promptly. Look closer only if such changes arrive in suspiciously large numbers, which might indicate bot activity.

New Content Additions: These require more attention. Check if the new paragraph flows logically with the existing text. Does it contradict other sections? If so, flag it for discussion. Also, verify that the sources cited actually support the claims made. Sometimes editors cite a source that mentions the topic only in passing, which is insufficient evidence.

Deletions and Reversions: Be cautious here. If an editor removes a large chunk of text, ask why. Was it poorly sourced? Or were they edit warring, that is, trying to force their viewpoint by deleting opposing arguments? If the deletion seems motivated by disagreement rather than quality improvement, revert it and leave a polite note explaining your reasoning.

Biographical Information: Articles about living persons (BLP) have stricter standards. Any negative or controversial claim about a living person must be backed by high-quality, independent sources. If an edit adds unverified gossip or allegations, reject it outright. Protecting individuals from defamation is a priority.

Avoiding Common Reviewer Pitfalls

Even experienced reviewers make mistakes. Being aware of common pitfalls can help you avoid them and maintain trust within the community.

Over-rejecting Good Faith Edits: New editors often make honest mistakes. They might forget to add a reference or use slightly informal language. Instead of rejecting their entire contribution, consider accepting the edit and leaving a friendly comment guiding them on how to improve next time. Harsh rejection discourages participation. Wikipedia thrives on welcoming newcomers, not shutting them out.

Ignoring Context: Always read the surrounding text. An edit might look fine in isolation but create contradictions when viewed in context. For example, adding a sentence about a company's revenue growth might clash with a later section detailing recent layoffs. Ensure the article tells a coherent story.

Bias Creep: We all have biases. As a reviewer, you must actively check yours. If you strongly disagree with a political viewpoint expressed in an edit, ensure your rejection is based on lack of sourcing or non-neutral tone, not because you dislike the idea. Personal opinion has no place in the review process. Stick to the rules.

Delaying Decisions: Leaving edits in limbo for weeks hurts the article. If you are unsure, don't leave it hanging. Flag it for another reviewer or move it to the talk page for discussion. Stale queues frustrate both readers and editors.

| Edit Type | Action | Reasoning |
|---|---|---|
| Typo fix | Accept | No risk, improves readability |
| Unsourced claim | Reject/Flag | Violates verifiability policy |
| Non-neutral language | Reject/Edit | Violates NPOV policy |
| Major deletion | Revert/Discuss | Potential edit warring or content loss |
| BLP controversy | Reject if unsourced | High risk of defamation |
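
If it helps to keep the table at hand while working through a queue, it can be expressed as a simple lookup. This is a minimal sketch: the keys, the action labels, and the `suggested_action` helper are hypothetical names chosen for illustration. The fallback for unlisted edit types follows the advice above to take uncertain cases to the talk page rather than leave them hanging.

```python
# Hypothetical mapping that mirrors the table above; keys and labels are illustrative.
DEFAULT_ACTIONS = {
    "typo_fix": "accept",
    "unsourced_claim": "reject_or_flag",
    "non_neutral_language": "reject_or_edit",
    "major_deletion": "revert_or_discuss",
    "blp_controversy": "reject_if_unsourced",
}


def suggested_action(edit_type: str) -> str:
    """Look up the default action; unlisted edit types go to talk-page discussion."""
    return DEFAULT_ACTIONS.get(edit_type, "discuss_on_talk_page")


print(suggested_action("typo_fix"))      # accept
print(suggested_action("image_change"))  # discuss_on_talk_page
```

The lookup only suggests a starting point; the context of the article still decides the final call.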

Communicating Effectively with Editors

Reviewing is not just about clicking buttons; it is about communication. When you reject an edit, you are sending a message to another human being. How you phrase that message matters.

Always leave a clear, constructive reason for your decision. Vague comments like "not acceptable" or "bad edit" are unhelpful. Instead, say: "Please add a reliable source for this claim," or "The language here is too promotional; please rephrase to be neutral." Specific feedback helps editors learn and improve.

If you are unsure about a complex edit, invite discussion. Use the article's talk page to ask questions or propose alternatives. Collaboration often leads to better outcomes than unilateral decisions. Remember, you are part of a community, not a solitary judge.

Also, recognize when to step back. If you find yourself repeatedly clashing with a particular editor, take a break. Emotions can cloud judgment. Let someone else handle the review, or seek mediation. Maintaining a positive working environment is essential for long-term productivity.

The Impact of Your Role on Wikipedia’s Health

Your work as a reviewer directly impacts the reliability of Wikipedia. Every good edit you accept strengthens the encyclopedia; every bad edit you reject keeps misinformation from spreading. In a world where fake news travels fast, your diligence helps preserve truth.

Moreover, your actions set an example. By following policies consistently and treating editors with respect, you model the behavior you want to see in others. Newcomers watch how experienced users interact. If they see fairness and clarity, they are more likely to stay and contribute positively.

Finally, remember that the system relies on volunteers. There is no central authority dictating every decision. You have autonomy, but also accountability. Use that power wisely. Stay informed about policy updates, engage in community discussions, and continuously refine your skills. The health of Wikipedia depends on people like you doing the hard work behind the scenes.

What happens if I accidentally accept a bad edit?

Don't worry. Mistakes happen. As soon as you notice, revert the edit and leave a note explaining the error. If you miss it, other reviewers or automated tools may still catch it later. Learning from mistakes is part of the process.

How do I know if a source is reliable?

Look for established publishers, academic journals, or reputable news outlets. Avoid self-published material, blogs, or forums. If in doubt, check Wikipedia's list of reliable sources or ask on the talk page.

Can I edit the article myself after reviewing?

Yes, but be careful. Editing right after reviewing can appear biased. It's better to separate these roles. If you make changes, document them clearly on the talk page.

What should I do if I suspect vandalism?

Revert the edit immediately and report it using the appropriate tools. If the pattern continues, seek admin assistance, since blocking a user is an administrator action rather than a reviewer one. Speed is key to minimizing damage.

Is there a time limit for reviewing pending changes?

While there is no strict deadline, aim to review within 24 to 48 hours. Delays cause frustration and reduce the effectiveness of the protection system. Prioritize urgent or high-visibility articles.