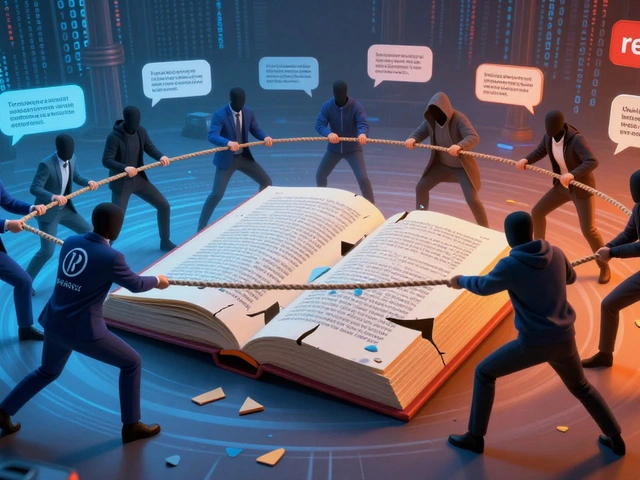

Something strange happens when you trace news stories back to their origins. A major newspaper reports a scandal. Another outlet picks up that report weeks later, citing the original article. Then Wikipedia gets updated with both articles. Months pass, and a new journalist needs background info for a fresh piece. They look at Wikipedia, find those same two articles cited there, and reference them again. We've just completed a full circle where nothing new was verified or added.

This isn't hypothetical; it's a documented pattern affecting thousands of news stories every year. Wikipedia is a free online encyclopedia edited collaboratively by volunteers worldwide, hosting over 60 million articles across 300+ languages. Despite its open-edit model, research shows approximately 30% of professional journalists consult it during research. Here's what actually happens when they cite it, and why this practice creates real problems for media integrity.

The Anatomy of Citation Cycles

Citation cycles happen when multiple sources point to each other without independent verification. Picture three connected dots: News Source A, News Source B, and Wikipedia. When Source A publishes something unverified, Source B cites Source A instead of checking primary documents. Wikipedia then lists both as references. Later, Source C reads Wikipedia, sees "credible" sources listed, and uses that information without checking A or B directly. The cycle closes itself, reinforcing claims that may never have been true.
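The three-dot picture above can be treated as a directed graph in which an edge means "cites". The following is a minimal sketch, not a production tool: the outlet names and the `cites` mapping are hypothetical, and it assumes the loop closes once Wikipedia adds Source C's article as a reference. It uses a depth-first search to report one loop if any exists.

```python
from typing import Dict, List, Optional


def find_citation_cycle(cites: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one citation loop (first node repeated at the end), or None."""
    UNSEEN, IN_PROGRESS, DONE = 0, 1, 2
    state: Dict[str, int] = {}
    path: List[str] = []

    def visit(node: str) -> Optional[List[str]]:
        state[node] = IN_PROGRESS
        path.append(node)
        for target in cites.get(node, []):
            target_state = state.get(target, UNSEEN)
            if target_state == IN_PROGRESS:
                # The target is already on the current path: a loop is closed.
                return path[path.index(target):] + [target]
            if target_state == UNSEEN:
                found = visit(target)
                if found:
                    return found
        path.pop()
        state[node] = DONE
        return None

    for node in list(cites):
        if state.get(node, UNSEEN) == UNSEEN:
            found = visit(node)
            if found:
                return found
    return None


# Hypothetical data: "X": ["Y"] means X cites Y.
cites = {
    "Source B": ["Source A"],
    "Wikipedia": ["Source A", "Source B", "Source C"],
    "Source C": ["Wikipedia"],
}
print(find_citation_cycle(cites))
```

In this toy graph the loop runs between Wikipedia and Source C. Source A never cites anything, so if its claim was unverified, nothing in the loop ever checks it.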

A 2023 study by MIT researchers analyzed 500 news articles across major outlets. They found that when one publication made a factual error, within 45 days, that error appeared in an additional 12 newsrooms through this circular referencing. Once Wikipedia included the mistake in a popular article, the error rate jumped by another 40%. This snowball effect transforms small errors into widely accepted "facts" even among experienced professionals.

The problem intensifies during breaking news situations. When pressure is highest to publish first, verification takes second place. Editors under deadline pressure might see Wikipedia referenced alongside government reports and think "if it's in both places, it must be checked." But Wikipedia rarely has access to classified documents, official transcripts, or internal investigations that legitimate journalism requires. It operates entirely on publicly available information, often the same information being recycled through the cycle.

How Journalists Actually Use Wikipedia

Most journalists don't use Wikipedia as their only source; they use it strategically for background context. According to surveys conducted with newsroom staff, here are typical usage patterns:

- Quick Facts Check: Verifying basic details like dates, locations, or biographical information before interviews

- Terminology Reference: Understanding technical terms specific to complex subjects

- Historical Context: Gaining an overview of events spanning multiple years

- Identifying Primary Sources: Finding links to original documents Wikipedia already cited

- Trend Monitoring: Tracking ongoing story developments across different platforms

| Source Type | Usage Frequency | Verification Method Required |
|---|---|---|
| Government Records | Daily | Mandatory document review |
| Wikipedia Articles | Weekly | Check edit history & talk pages |
| News Aggregators | Daily | Verify against original outlet |
| Social Media Posts | Daily | Triangulate with 3+ sources |
| Expert Interviews | Project-based | Recorded interview + documentation |

Why Editors Sometimes Approve Wikipedia References

The pressure on modern newsrooms comes from multiple directions simultaneously. Economic constraints mean smaller editorial teams managing more beats than ever before. When covering local politics, environmental issues, or tech product launches, reporters simply can't verify everything independently. Under these conditions, Wikipedia appears attractive because it looks professional, has extensive coverage of niche topics, and often includes citations themselves.

Brian Stelter, former New York Times media reporter, noted in 2024 that editors approve Wikipedia references when three conditions align: the information needs to be broadly accessible to readers, time constraints prevent independent verification, and the Wikipedia article demonstrates stability (few recent edits, neutral tone, established references). These guardrails work most of the time, but the cases where problematic content slips through still matter tremendously for public trust.

News organizations differ significantly in their policies. AP Stylebook explicitly prohibits citing Wikipedia as a source but allows using it for background research. Reuters guidelines take a harder stance: no citations allowed period. Meanwhile, BuzzFeed and some digital-native publications maintain looser standards, prioritizing speed and shareability over strict sourcing protocols. This fragmentation means readers encounter wildly different reliability levels depending on which outlet they visit.

The Echo Chamber Effect

When information circulates only within closed networks, false narratives solidify into apparent facts. Consider the 2020 incident when a British politician supposedly made controversial remarks that never occurred. Initial rumors appeared in tabloid-style websites with zero verification. Within 72 hours, those claims appeared in respectable news outlets citing "public records." Those "records" had originated solely from an edited Wikipedia page with anonymous contributors.

Once enough people believe something is true simply because multiple "reliable" sources say so, challenging it becomes far harder. Psychologists call this social proof: the phenomenon where individuals assume collective agreement equals accuracy. In the age of viral misinformation, citation cycles amplify this bias. Each retweet, each shared article, each Wikipedia update multiplies the illusion of credibility.

Research from Oxford University in late 2024 identified specific warning signs indicating circular referencing:

- Articles citing Wikipedia without listing original sources themselves

- Multiple publications using nearly identical phrasing with matching citation chains

- Information appearing simultaneously across outlets with no staggered reporting timeline

- Topics lacking primary documents despite claims of official record existence

- Over-reliance on anonymous sources backed only by secondary news coverage
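The "nearly identical phrasing" warning sign can be approximated with a simple text-overlap score. The sketch below compares two passages by the Jaccard similarity of their three-word shingles. The function names, the shingle size, and the sample sentences are illustrative assumptions, not a method taken from the research mentioned above.

```python
import re
from typing import Set, Tuple


def shingles(text: str, size: int = 3) -> Set[Tuple[str, ...]]:
    """Split text into lowercase words and return the set of word n-grams."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def phrasing_overlap(a: str, b: str, size: int = 3) -> float:
    """Jaccard similarity of the two shingle sets, between 0.0 and 1.0."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)


# Hypothetical passages from two different outlets.
outlet_one = "The minister resigned after the report was leaked to the press"
outlet_two = "The minister resigned after the report was leaked to reporters"
score = phrasing_overlap(outlet_one, outlet_two)
print(f"overlap: {score:.2f}")
```

A high score does not prove copying, since wire copy and direct quotes also produce overlap. It only marks pairs of articles whose citation chains deserve a closer look.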

Building Reliable Research Practices

Fixing citation cycles requires structural changes throughout the journalism pipeline. Individual reporters can implement several effective strategies starting immediately.

- Reverse-Cite Everything: When Wikipedia references appear in your research, locate the original source behind each claim rather than accepting the summary alone

- Document Edit Histories: Check who made changes recently, especially on controversial topics. Sudden bulk edits often signal coordination or vandalism attempts

- Use Wayback Machine: Archive versions of articles show when information first appeared and whether claims evolved over time

- Contact Original Reporters: If a Wikipedia article cites a journalist's work, reach out to confirm whether information was verified during initial reporting

- Verify Through Multiple Channels: Never rely on Wikipedia-plus-one-other-source. Independent corroboration should come from at least two completely separate information ecosystems
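The "sudden bulk edits" check from the list above can be sketched as a sliding-window count over revision timestamps. This example assumes the timestamps have already been collected from an article's edit history and are written in UTC as `YYYY-MM-DDTHH:MM:SSZ`. The sample data, the one-hour window, and the threshold of five edits are all arbitrary choices for illustration.

```python
from datetime import datetime, timedelta
from typing import List


def max_edits_in_window(timestamps: List[str], window: timedelta) -> int:
    """Return the largest number of edits that fall within any single window."""
    times = sorted(datetime.strptime(t, "%Y-%m-%dT%H:%M:%SZ") for t in timestamps)
    best = 0
    start = 0
    for end, current in enumerate(times):
        # Shrink the window from the left until it spans at most `window`.
        while current - times[start] > window:
            start += 1
        best = max(best, end - start + 1)
    return best


# Hypothetical revision timestamps for one article.
revisions = [
    "2024-03-01T09:00:00Z",
    "2024-03-04T14:00:00Z",
    "2024-03-04T14:02:00Z",
    "2024-03-04T14:05:00Z",
    "2024-03-04T14:07:00Z",
    "2024-03-04T14:09:00Z",
    "2024-03-10T08:30:00Z",
]
BURST_THRESHOLD = 5  # assumed cutoff; tune per topic
peak = max_edits_in_window(revisions, timedelta(hours=1))
print("possible bulk edit" if peak >= BURST_THRESHOLD else "no burst detected")
```

A burst is only a prompt to read the diffs and the talk page. Breaking news legitimately produces many edits in a short period.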

Organizations benefit from institutional safeguards too. Some progressive newsrooms now require "citation transparency," meaning writers must explain not just what sources they used, but why those particular sources were trusted for specific claims. Others employ verification specialists whose sole job involves tracking information flow across platforms to detect circular referencing patterns.

Tools are improving rapidly to help with this work. Automated systems can map reference relationships between articles, flagging suspicious patterns where five outlets cite three overlapping sources that all ultimately trace back to Wikipedia edits. While not perfect, these tools represent the first systematic attempt to solve problems humans create unconsciously through normal workflow habits.
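As a rough illustration of what such reference mapping involves, the sketch below follows each outlet's citation chain to its endpoints and flags outlets whose claims rest on a single ultimate source. It does not describe any particular product. The outlet names, the `cites` mapping, and the "Wikipedia edit" node are hypothetical.

```python
from typing import Dict, List, Set


def ultimate_sources(cites: Dict[str, List[str]], start: str) -> Set[str]:
    """Follow citations from `start` and return the sources that cite nothing further."""
    seen: Set[str] = set()
    endpoints: Set[str] = set()
    stack = [start]
    while stack:
        node = stack.pop()
        if node in seen:
            continue  # also protects against citation loops
        seen.add(node)
        targets = cites.get(node, [])
        if not targets and node != start:
            endpoints.add(node)
        stack.extend(targets)
    return endpoints


# Hypothetical data: five outlets, three overlapping intermediate reports.
cites = {
    "Outlet 1": ["Report X", "Report Y"],
    "Outlet 2": ["Report Y", "Report Z"],
    "Outlet 3": ["Report X", "Report Z"],
    "Outlet 4": ["Report X"],
    "Outlet 5": ["Report Y", "Court filing"],
    "Report X": ["Wikipedia edit"],
    "Report Y": ["Wikipedia edit"],
    "Report Z": ["Wikipedia edit"],
}
outlets = [name for name in cites if name.startswith("Outlet")]
flagged = [o for o in outlets if ultimate_sources(cites, o) == {"Wikipedia edit"}]
print(flagged)
```

Outlet 5 is not flagged because one of its chains ends at an independent document. That mirrors the earlier advice about corroboration from separate information ecosystems.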

Real-World Consequences

The impact extends beyond academic debates about sourcing methodology. Political campaigns exploit citation vulnerabilities deliberately. In 2025, investigators discovered coordinated efforts to plant misinformation on Wikipedia pages targeting vulnerable candidates. Because journalists treat established Wikipedia entries as relatively safe reference points, false information could spread through legitimate news channels without raising red flags until damage had already occurred.

Economic effects ripple outward too. Financial decisions increasingly depend on media-reported company profiles, executive biographies, and industry statistics. When investment analysts read reports containing unverified claims sourced through circular referencing, capital is misallocated accordingly. One European market analysis from 2024 estimated that misinformation propagated through compromised citation chains cost investors roughly $12 billion across affected sectors within eighteen months.

Social consequences prove even harder to measure. Public trust in traditional media continues declining year after year. When people discover that "well-sourced" articles actually contain recycled Wikipedia edits, cynicism compounds across multiple generations of news consumers. Restoring confidence requires demonstrably better practices, not aspirational goals written in style guides nobody implements consistently.

What You Can Do Right Now

If you work in communications, editing, or information management, immediate actions matter most. Start monitoring citation patterns within your own organization. Track whether stories repeat identical reference structures, check if Wikipedia serves as ultimate authority on critical claims, and establish protocols requiring independent verification before publication.

For everyday readers skeptical about information quality, simple techniques work surprisingly well. Google Scholar searches reveal academic sources confirming general claims. Reverse image searches expose recycled photographs without credit. Checking edit histories on controversial Wikipedia articles exposes manipulation attempts. These steps take minutes but dramatically improve information reliability for personal decision-making.

The fundamental truth remains unchanged: knowledge depends on verification quality, not source prestige. Whether information originates from ancient manuscripts, university archives, or collaborative wikis, human judgment determines value through careful cross-referencing against competing explanations and contradictory evidence.

Can journalists legally cite Wikipedia?

Yes. Citing a source requires no special permission, and Wikipedia's text is itself released under a Creative Commons license that allows reuse with attribution. However, professional ethics standards discourage relying on it as primary evidence, especially for claims requiring verification through original documentation.

How do I know if Wikipedia information is reliable?

Check the references section beneath each article. Quality Wikipedia pages link to peer-reviewed studies or official documents. Review edit history for recent changes, examine talk pages for disputes, and cross-reference claims with at least two independent sources before trusting contested information.

Why do citation cycles form so easily?

Deadline pressures combined with limited resources create incentives for reusing existing information rather than conducting independent verification. When multiple journalists face similar constraints, circular referencing emerges naturally as efficiency-driven behavior, not necessarily intentional deception.

Are any news organizations banning Wikipedia citations entirely?

Reuters and Associated Press prohibit direct Wikipedia citations but allow using it for background research. BBC maintains stricter guidelines requiring original source verification. Most mainstream outlets occupy middle ground permitting occasional citation for uncontested factual claims.

What happens when false Wikipedia information spreads to major news outlets?

Correction mechanisms vary significantly. Smaller outlets may issue brief clarifications or remove articles entirely. Major publications typically publish formal corrections with transparency notes explaining errors. However, damage to public perception persists long after technical corrections occur.