Tag: Wikipedia vandalism

Using ORES and Machine Learning to Flag Risky Wikipedia Edits

ORES uses machine learning to detect vandalism on Wikipedia by analyzing edit patterns in real time. It helps human editors prioritize risky changes, reducing the time harmful content stays online. Trained on decades of edit history, it catches more than 80% of vandalism, and does so faster than humans working alone.

Notable Vandalism Cases: When Wikipedia Gets It Wrong

Wikipedia is a powerful tool, but it's not immune to sabotage. From fake biographies to political lies, here are real cases where misinformation slipped through, and how to spot it before you believe it.

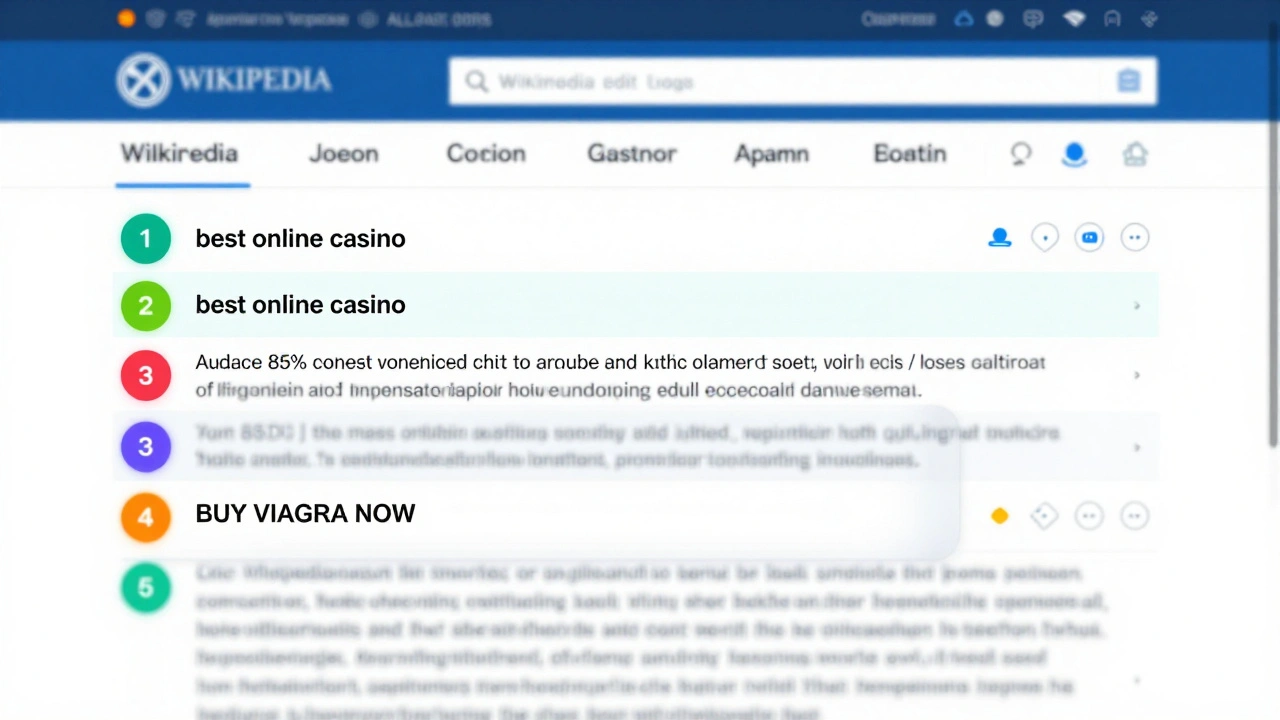

AbuseFilter Examples on Wikipedia: Building Effective Rules to Stop Vandalism

AbuseFilter on Wikipedia checks each edit against editor-written rules to stop vandalism automatically. See real examples of effective filters, learn how to build your own, and find out why human reviewers still matter.

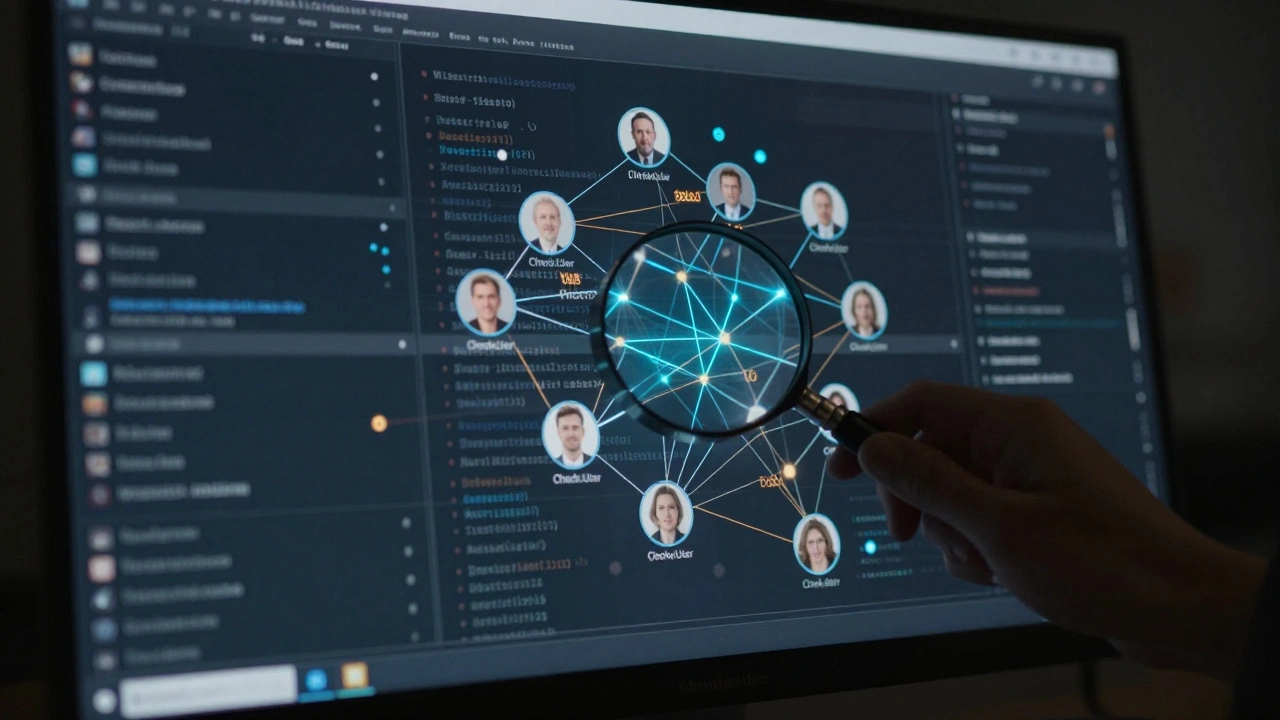

CheckUser Workflow on Wikipedia: How Editors Detect Vandalism with Data Limits

CheckUser is a critical but limited tool used by trusted Wikipedia editors to detect coordinated vandalism by linking accounts through technical data. It doesn't reveal identities but helps stop repeat offenders by uncovering hidden connections behind edits.

How to Detect and Report COI and Undisclosed Paid Editing on Wikipedia

Learn how to spot and report undisclosed paid editing and conflict of interest on Wikipedia. These biased edits undermine public trust, but anyone can help fix them.

Wikipedia Vandalism: Detection, Reversion, and Protection Levels Explained

Wikipedia combats vandalism with bots and patrols. ClueBot NG catches attacks in seconds; rollback tools revert edits in minutes. Protection levels range from semi-protected to fully locked pages, keeping content reliable.

Sockpuppet Detection and Prevention on Wikipedia: Key Methods

Wikipedia combats sockpuppet accounts through technical tools and volunteer vigilance. Learn how detection works, what signs to watch for, and why this matters for online trust.

How Wikipedia Handles Vandalism Conflicts and Edit Wars

Wikipedia handles vandalism and edit wars through a mix of automated bots, volunteer moderators, and strict sourcing rules. Conflicts are resolved by community consensus, not votes, and persistent offenders face bans. Transparency and accountability keep the system working.