Archive: 2026/03 - Page 4

Reducing Hallucinations in AI: How Wikipedia Citations Keep AI Answers Accurate

AI often makes things up, but using Wikipedia citations helps ground its answers in real sources. This approach cuts hallucinations by over 50% and builds trust in factual AI responses.

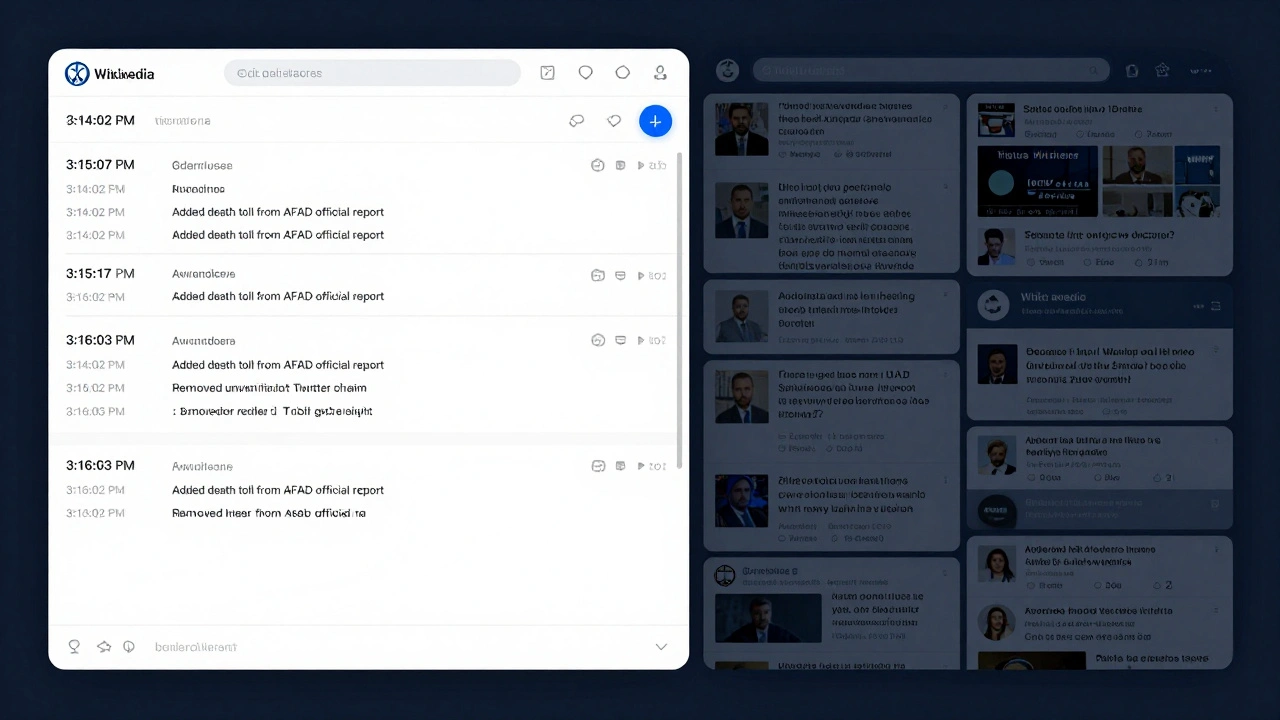

How Wikipedia Handles Time-Stamping and Edit Summaries During Breaking News Events

During breaking news, Wikipedia relies on precise time-stamping and clear edit summaries to keep information accurate and transparent. Learn how volunteers and automated tools work together to update pages in real time without sacrificing reliability.

Global Knowledge Equity: How to Close the Content and Access Gaps

Global knowledge equity means ensuring everyone, everywhere, can access and contribute to the information they need. This article explores why content gaps exist and how local communities are building their own solutions, from offline libraries to AI trained in Indigenous languages.

WikiGap Events: Announcements and Ambassador Resources

WikiGap events are global initiatives to close the gender gap on Wikipedia by creating and improving articles about women. Learn how ambassadors organize events, access resources, and make lasting changes to who gets remembered in history.

Wikipedia vs Grokipedia: Trust, Accuracy, and Governance Side by Side

Wikipedia and Grokipedia offer different approaches to online knowledge: one built by humans, the other by AI. This comparison reveals how transparency, accuracy, and governance shape trust in digital encyclopedias.

Policy Debates About AI-Generated Content on Wikipedia

Wikipedia's policy on AI-generated content is under intense debate as automated tools flood the encyclopedia with synthetic text. Editors struggle to balance accuracy, transparency, and the core principle that knowledge must be human-curated.

What Happens When Wikipedia Policies Conflict: NPOV vs. Verifiability Case Studies

When Wikipedia's NPOV and Verifiability policies clash, editors rely on sources, not opinions. Real case studies show how neutral reporting wins over bias, and why this matters for online truth.

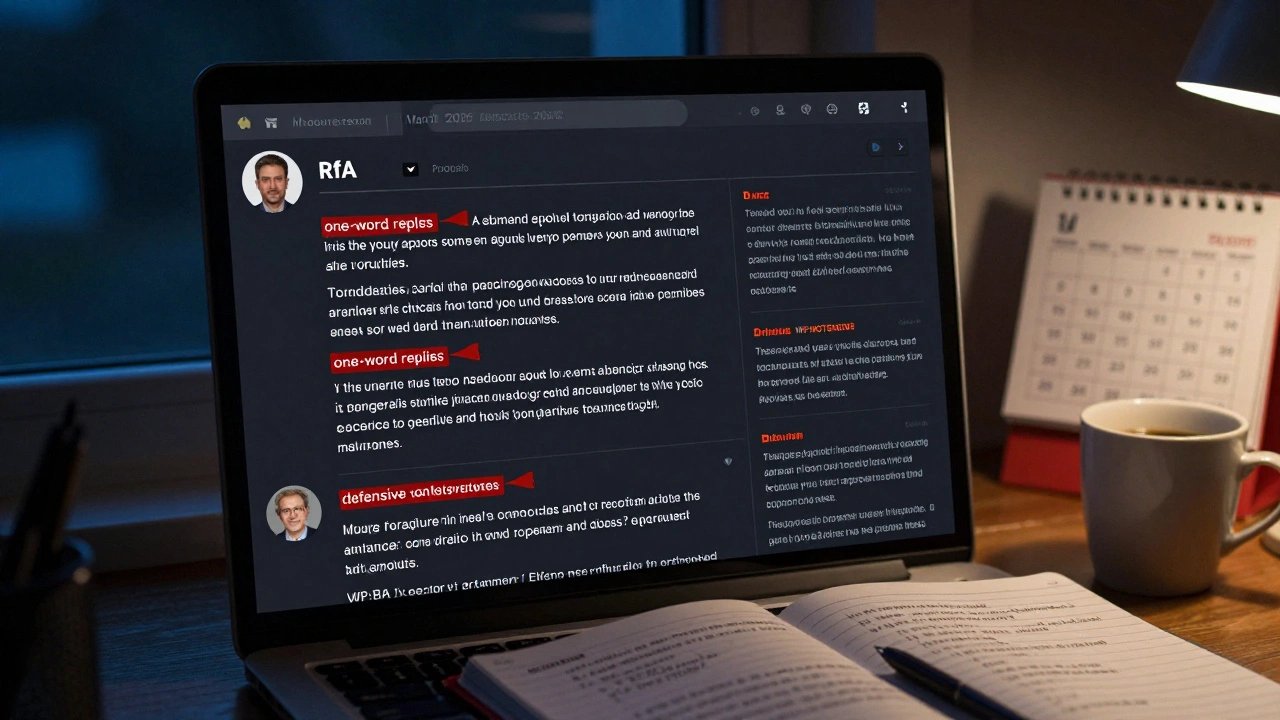

RfA Trends in 2025: Success Rates and Community Expectations

In 2025, Wikipedia's RfA success rate has dropped to 17% as community expectations rise. Admins now need conflict resolution skills, cultural awareness, and emotional maturity, not just edit counts. Learn what really matters today.

Off-Wiki Canvassing and How It Undermines Wikipedia Consensus

Off-wiki canvassing undermines Wikipedia's consensus by letting outside groups influence edits through social media and other platforms. This violates the core principle of neutral, evidence-based collaboration and erodes trust in the encyclopedia.

Off-Wiki Canvassing and How It Undermines Wikipedia Consensus

Off-wiki canvassing undermines Wikipedia's consensus by manipulating edits from outside the platform. It erodes trust, triggers edit wars, and threatens the integrity of one of the world's most trusted information sources.

Off-Wiki Canvassing and Its Impact on Wikipedia Consensus

Off-wiki canvassing undermines Wikipedia's consensus by allowing external influence on edits. This practice distorts collaboration, erodes trust, and drives away contributors. Learn how it works, why it's banned, and what you can do to protect Wikipedia's integrity.

Affiliations Committee Changes and Impact on Wikipedia Communities

Changes to Wikipedia's Affiliations Committee in 2025 reshaped how global volunteer groups are supported, leading to faster approvals, better funding, and stronger representation from the Global South. The shift is helping revive editor growth in underrepresented regions.