Tag: Wikipedia moderation

What Wikipedia Administrators Do: Roles and Responsibilities Explained

Wikipedia administrators are unpaid volunteers who maintain the site by enforcing policies, handling vandalism, and mediating disputes. They don't decide what's true; they ensure that the rules are followed.

Bans of High-Profile Wikipedia Editors: What Led to Them

High-profile Wikipedia editors have been banned for abuse of power, sockpuppetry, and undisclosed paid editing. These cases reveal how the community enforces fairness, even against its most experienced members.

Living Policy Documents: How Wikipedia Adapts to New Challenges

Wikipedia's policies aren't static rules; they're living documents shaped by community debate, real-world threats, and constant adaptation. Learn how volunteers keep the encyclopedia accurate and trustworthy.

How Wikipedia Enforces Behavioral Policies: Civility, Harassment, and Blocks

Wikipedia enforces civility and fights harassment through volunteer moderation, public blocks, and transparent policies. Learn how editors are warned, blocked, and held accountable without corporate oversight.

Autopatrolled Status on Wikipedia: What It Takes and What It Means

Autopatrolled status on Wikipedia lets the new articles of trusted editors skip manual review by new page patrollers. Learn the criteria, the responsibilities, and why this quiet system keeps the encyclopedia running.

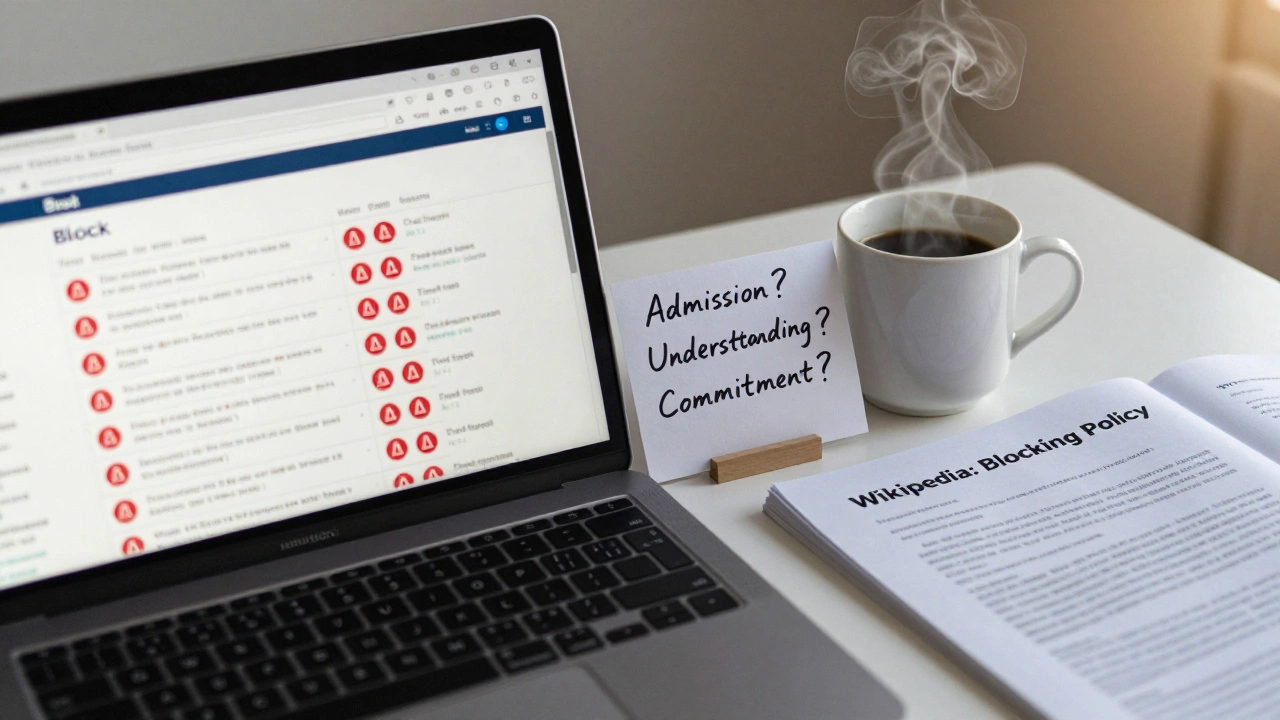

How Wikipedia Administrators Evaluate Unblock Requests

Wikipedia administrators must carefully evaluate unblock requests by reviewing user history, assessing genuine change, and applying policy with fairness. Learn how to distinguish between sincere apologies and repeated disruption.

Governance Models: How Wikipedia Oversees AI Tools

Wikipedia uses AI tools to assist with editing and reviewing articles, but strict community-led oversight keeps their output accurate and fair. Learn how volunteers, policies, and transparency keep AI in check on the world's largest encyclopedia.

How Wikipedia Resolves Editorial Disagreements: The Real Process Behind Editorial Consensus

Wikipedia resolves editorial disagreements through consensus, not votes. Editors use policies, talk pages, and mediation to agree on accurate, sourced content. Learn how real people build reliable information together.

How Wikipedia Handles Vandalism Conflicts and Edit Wars

Wikipedia handles vandalism and edit wars through a mix of automated bots, volunteer moderators, and strict sourcing rules. Conflicts are resolved by community consensus, not votes, and persistent offenders face bans. Transparency and accountability keep the system working.

Blocking Policy Case Studies from Wikipedia Administrator Noticeboards

Real case studies from Wikipedia's administrator noticeboards show how blocks are decided, appealed, and refined. Transparency, policy, and community oversight drive moderation, not secrecy.

How Edit Filters and Pending Changes Work on High-Risk Wikipedia News Articles

Learn how edit filters and pending changes protect Wikipedia's high-risk news articles from vandalism and misinformation. Understand how to get your edits approved and what to do when they're rejected.

How to Monitor Wikipedia Article Talk Pages for Quality Issues

Monitoring Wikipedia talk pages helps identify quality issues before they spread. Learn how to spot red flags, use tools, and contribute to better information across the platform.